Michael Joe Cini

21st April 2021

AI Tool Detects Skin Cancer Using Smartphones

AI tool developed by researchers at MIT to identify suspicious skin lesions using smartphone cameras

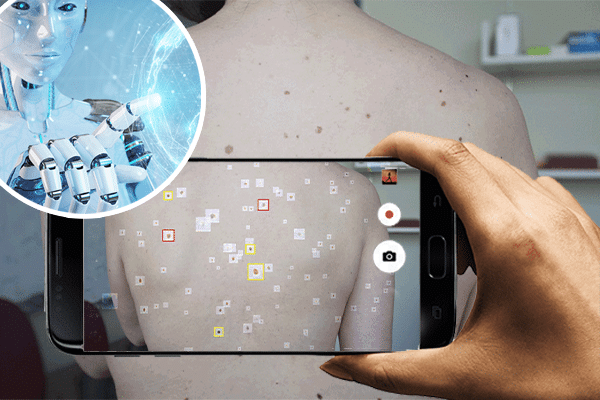

Researchers at MIT and other institutions around Boston have developed an artificial intelligence (AI) tool that uses deep convolutional neural networks (DCNNs) to identify suspicious skin lesions in photos taken with smartphone cameras.

Melanoma, which develops when pigment-producing cells (melanocytes) multiply uncontrollably, is responsible for more than 70% of skin cancer-related deaths, so early diagnosis is essential.

Skin cancer diagnosis typically relies on visual inspection of suspicious pigmented lesions (SPLs), which can be difficult and inaccurate. To address this, the researchers developed a deep learning tool that identifies SPLs in photographs taken on a smartphone.

The AI-backed tool was developed and evaluated using more than 20,000 images from 133 patients, along with images from publicly available databases. Importantly, the researchers wanted to ensure the tool would work in real-world conditions, so they used pictures taken with smartphone cameras.

The tool reportedly achieved a sensitivity of more than 90.3% and a specificity of 89.9% in distinguishing SPLs from nonsuspicious lesions, skin and complex backgrounds.
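Sensitivity and specificity are the standard measures for a yes/no classifier like this one. The short Python sketch below shows how they are computed from confusion-matrix counts. The function name and the counts are hypothetical, chosen only so the output matches the reported percentages; they are not the study's data or code.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts.

    tp: suspicious lesions correctly flagged as suspicious
    fn: suspicious lesions missed (labelled nonsuspicious)
    tn: nonsuspicious regions correctly cleared
    fp: nonsuspicious regions wrongly flagged as suspicious
    """
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    return sensitivity, specificity


# Hypothetical counts for illustration only (not from the study):
# 1,000 suspicious and 1,000 nonsuspicious examples.
sens, spec = sensitivity_specificity(tp=903, fn=97, tn=899, fp=101)
print(f"sensitivity={sens:.1%}, specificity={spec:.1%}")
# prints: sensitivity=90.3%, specificity=89.9%
```

High sensitivity means few suspicious lesions are missed; high specificity means few harmless lesions are flagged for referral.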

Luis R. Soenksen, first author of the paper published in Science Translational Medicine, said:

"Our research suggests that systems leveraging computer vision and deep neural networks, quantifying such common signs, can achieve comparable accuracy to expert dermatologists."

"We hope our research revitalizes the desire to deliver more efficient dermatological screenings in primary care settings to drive adequate referrals."

Source: Medgadget